Overcoming AI agent context limits is the next goal for engineering leaders who want more reliable software output. While today’s generative models write excellent code snippets, they regularly fail at long-horizon tasks.

As the amount of information fed into a model grows, its ability to attend to that information degrades. Some in the tech community call this region of degraded attention the “Dumb Zone”. When a system enters it, performance drops, which can stall deployment pipelines and waste compute.

Building a system that aids software engineering requires balancing broad planning with narrow, executable actions. Software engineering resembles an open-ended game demanding both strategy and tactics. Remembering a specific bash command is a simple tactical action, whereas designing a backwards-compatible database schema requires long-term planning. To succeed, the system must integrate new information discovered throughout the task without losing track of the overall goal.

Consider a scenario where a financial institution tasks an AI with refactoring a legacy billing application. The software must retain its core business rules across hundreds of file changes. If the agent loses track of the initial data governance requirements mid-task, it introduces compliance risks and can invalidate the automated work entirely.

How AI agent context limits impact software output

Existing architectures attempt to solve these problems but force engineering teams to accept major trade-offs. One common method relies on compaction, where the system periodically drops context it judges irrelevant. However, this compression is lossy in unpredictable ways, so it can discard important information.
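
To make the risk concrete, here is a minimal TypeScript sketch of one naive compaction policy: drop the oldest non-system messages until the transcript fits a token budget. The word-count token estimate, the `compact` function, and the sample transcript are all hypothetical; production systems often use model-written summaries instead, whose losses can be even harder to anticipate.

```typescript
// Hypothetical chat message shape used only for this illustration.
interface ChatMessage {
  role: "system" | "user" | "assistant" | "tool";
  content: string;
}

// Rough token estimate (an assumption): one token per whitespace-separated word.
function estimateTokens(m: ChatMessage): number {
  return m.content.split(/\s+/).filter((w) => w.length > 0).length;
}

// Naive compaction: drop the oldest non-system messages until under budget.
function compact(transcript: ChatMessage[], budget: number): ChatMessage[] {
  const result = [...transcript];
  let total = result.reduce((sum, m) => sum + estimateTokens(m), 0);
  let i = 0;
  while (total > budget && i < result.length) {
    if (result[i].role === "system") {
      i++; // the system prompt is always kept
      continue;
    }
    total -= estimateTokens(result[i]);
    result.splice(i, 1); // oldest droppable message goes first
  }
  return result;
}

const transcript: ChatMessage[] = [
  { role: "system", content: "You are a refactoring agent." },
  { role: "user", content: "Never log card numbers in plain text." },
  { role: "tool", content: "edited billing/invoice.ts lines 10 to 40" },
  { role: "tool", content: "edited billing/ledger.ts lines 5 to 90" },
];

const compacted = compact(transcript, 14);
// Only the system prompt and the latest edit survive; the governance rule is gone.
console.log(compacted.map((m) => m.content));
```

Here the user’s governance rule is the first thing discarded, which is exactly the failure the billing scenario above warns about.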

Another approach delegates tasks to isolated subagents. Because these subagents operate in their own silos, they rely on message passing to synchronise with the main system. Other platforms follow a pattern where a high-level planner delegates work to a lower-level executor, whose results are compressed and returned to the main agent. Compressing data at every handoff risks dropping key state details.
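
A hedged sketch of that planner/executor handoff, assuming the executor returns structured findings and the handoff keeps only a fixed number of them. `ExecutorResult`, `execute`, and `compressForPlanner` are illustrative names, not any platform’s real API.

```typescript
// Hypothetical record of what an executor learned while doing one subtask.
interface ExecutorResult {
  subtask: string;
  filesChanged: string[];
  discoveries: string[]; // constraints found along the way
}

// Stand-in executor; a real one would call a model and tools.
function execute(subtask: string): ExecutorResult {
  return {
    subtask,
    filesChanged: ["billing/invoice.ts", "billing/tax.ts"],
    discoveries: [
      "tax.ts rounds half-up",
      "invoice ids must stay 12 digits",
      "ledger rows are append-only",
    ],
  };
}

// Lossy handoff: only the first `maxDiscoveries` findings survive.
function compressForPlanner(r: ExecutorResult, maxDiscoveries: number): string {
  const kept = r.discoveries.slice(0, maxDiscoveries);
  return `${r.subtask}: ${r.filesChanged.length} files changed; ${kept.join("; ")}`;
}

// The planner only ever sees this string, never the full result.
const summary = compressForPlanner(execute("migrate tax logic"), 2);
console.log(summary);
// "ledger rows are append-only" did not survive the handoff.
```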

To overcome traditional AI context limits, Random Labs has just released an agent named Slate, designed to orchestrate massive swarms of subagents directly through a code environment.

This swarm-native architecture operates more like a hive mind, synchronising many parallel threads. Rather than relying on message passing between subagents or on static plans, Slate uses a central orchestration agent that dispatches bounded worker threads. Each thread executes exactly one action and then pauses, handing control back to the main thread.
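
The dispatch model described above (one action per thread, then control returns to the orchestrator) can be sketched as a simple round-robin loop. This is a minimal illustration under assumed names (`WorkerThread`, `orchestrate`); it is not Slate’s implementation, and real actions would be model or tool calls rather than functions returning strings.

```typescript
type Action = () => string;

// Hypothetical bounded worker: each dispatch runs exactly one action, then yields.
class WorkerThread {
  readonly label: string;
  private readonly actions: Action[];
  private completed = 0;

  constructor(label: string, actions: Action[]) {
    this.label = label;
    this.actions = actions;
  }

  get done(): boolean {
    return this.completed >= this.actions.length;
  }

  // Run the next single action and return its result to the orchestrator.
  step(): string {
    const result = this.actions[this.completed]();
    this.completed += 1;
    return result;
  }
}

// Hypothetical orchestrator: control comes back here after every action,
// so it observes each result before anything else runs.
function orchestrate(threads: WorkerThread[]): string[] {
  const observed: string[] = [];
  let active = threads.filter((t) => !t.done);
  while (active.length > 0) {
    for (const t of active) {
      observed.push(`${t.label}: ${t.step()}`);
    }
    active = threads.filter((t) => !t.done);
  }
  return observed;
}

const log = orchestrate([
  new WorkerThread("search", [() => "found 3 callers", () => "read schema"]),
  new WorkerThread("tests", [() => "ran unit tests"]),
]);
console.log(log);
```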

The platform automatically selects a suitable model for each job, so teams spend only as much as a task requires. Enterprise teams can, for example, converse with Claude while generating code with Codex. The system programmatically coordinates various models, including Sonnet, Codex 5.3, and GLM 5, with GLM proving particularly effective for agentic search operations.
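
One way to picture cost-aware model selection is a registry lookup that picks the cheapest capable model. The `costTier` values below are placeholders chosen only to order the options, not real prices, and the registry, `selectModel`, and the short model labels are hypothetical.

```typescript
type TaskKind = "chat" | "codegen" | "search";

interface ModelProfile {
  model: string;
  handles: TaskKind[];
  costTier: number; // placeholder ordering for illustration, NOT real pricing
}

// Hypothetical registry loosely based on the model families the article names.
const registry: ModelProfile[] = [
  { model: "sonnet", handles: ["chat", "codegen", "search"], costTier: 3 },
  { model: "codex", handles: ["codegen"], costTier: 2 },
  { model: "glm", handles: ["search"], costTier: 1 },
];

// Pick the lowest-tier model that can handle the task kind.
function selectModel(kind: TaskKind, models: ModelProfile[]): string {
  const capable = models.filter((m) => m.handles.includes(kind));
  if (capable.length === 0) {
    throw new Error(`no model registered for ${kind}`);
  }
  const cheapest = capable.reduce((best, m) => (m.costTier < best.costTier ? m : best));
  return cheapest.model;
}

console.log(selectModel("chat", registry), selectModel("codegen", registry), selectModel("search", registry));
```

With these placeholders, chat goes to Sonnet, code generation to Codex, and search to GLM, mirroring the division of labour described above.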

Rather than passing messages back and forth, each thread action produces a compressed representation of its step history, called an episode. Because episodes retain only the tool calls that contributed to success, they form a tractable episodic memory. Instead of holding the full tactical trace of every step, the orchestrator keeps only the important results, sidestepping the context limits that usually plague long-running tasks.
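
A minimal sketch of turning a thread’s raw trace into an episode. The heuristic here, keeping only calls that succeeded, is an assumption standing in for “tool calls that contribute to success”; a real system could judge contribution very differently. `ToolCall`, `Episode`, and `toEpisode` are hypothetical names.

```typescript
// Hypothetical record of one tool call inside a worker thread.
interface ToolCall {
  tool: string;
  args: string;
  ok: boolean;
  output: string;
}

// Hypothetical compressed summary kept by the orchestrator instead of the trace.
interface Episode {
  thread: string;
  succeeded: boolean;
  keySteps: string[];
  result: string;
}

// Assumed heuristic: successful calls are treated as the ones that contributed.
function toEpisode(thread: string, trace: ToolCall[]): Episode {
  const last = trace[trace.length - 1];
  return {
    thread,
    succeeded: last !== undefined && last.ok,
    keySteps: trace.filter((c) => c.ok).map((c) => `${c.tool}(${c.args})`),
    result: last !== undefined ? last.output : "",
  };
}

const trace: ToolCall[] = [
  { tool: "grep", args: "calculateTax", ok: true, output: "2 matches" },
  { tool: "edit", args: "tax.ts", ok: false, output: "patch did not apply" },
  { tool: "edit", args: "tax.ts", ok: true, output: "patched" },
];

// Episodic memory: the failed attempt is not carried forward.
const memory: Episode[] = [toEpisode("tax-refactor", trace)];
console.log(memory[0].keySteps);
```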

This framework maps directly onto traditional operating system design. The main language model acts as both the central processing unit and the operating system kernel, managing its context much as a kernel manages random access memory. In Slate, each thread has its own memory space, much like a process. The development team originally called these threads “actors”, drawing conceptual inspiration from the BEAM virtual machine that runs Erlang.
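
The operating system analogy can be sketched with two small classes: each thread keeps private scratch memory, and the kernel’s bounded context only receives a one-line summary when the thread exits. `Kernel`, `ThreadProcess`, and the capacity value are hypothetical, chosen purely to illustrate the isolation.

```typescript
// Hypothetical "process" for one thread; its scratch memory is private.
class ThreadProcess {
  readonly id: string;
  private readonly scratch: string[] = [];

  constructor(id: string) {
    this.id = id;
  }

  remember(entry: string): void {
    this.scratch.push(entry);
  }

  // Only this short summary crosses into the kernel's context.
  exit(): string {
    return `${this.id}: done after ${this.scratch.length} steps`;
  }
}

// Hypothetical kernel: the main model's context window treated as bounded RAM.
class Kernel {
  readonly context: string[] = [];
  private readonly capacity: number;

  constructor(capacity: number) {
    this.capacity = capacity;
  }

  spawn(id: string): ThreadProcess {
    return new ThreadProcess(id);
  }

  // Reclaim a finished thread, keeping only its summary.
  reap(p: ThreadProcess): void {
    if (this.context.length >= this.capacity) {
      throw new Error("kernel context is full");
    }
    this.context.push(p.exit());
  }
}

const kernel = new Kernel(8);
const auditor = kernel.spawn("schema-audit");
for (let i = 0; i < 50; i++) {
  auditor.remember(`inspected table ${i}`);
}
kernel.reap(auditor);
console.log(kernel.context); // one entry, despite 50 private steps
```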

The developers note that real software tasks naturally decompose into parallel workstreams. The orchestrator can dispatch several threads at once and synthesise their episodes before continuing, which the developers say is faster than sequential, step-by-step agents. Reusing subthreads also maximises cache reuse, which keeps computational costs low despite the swarm approach.
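
The speed argument comes down to simple arithmetic: a sequential agent pays the sum of all step durations, while batched dispatch with a barrier pays only for the slowest job in each batch. The durations below are invented for illustration, and `ThreadJob`, `sequentialSeconds`, and `batchedSeconds` are hypothetical helpers.

```typescript
// Hypothetical unit of work with an assumed duration in seconds.
interface ThreadJob {
  label: string;
  seconds: number;
}

// A sequential agent runs every job one after another.
function sequentialSeconds(jobs: ThreadJob[]): number {
  return jobs.reduce((total, j) => total + j.seconds, 0);
}

// Batched dispatch: each batch runs concurrently, and the orchestrator waits
// for all episodes (a barrier) before continuing, so a batch costs its slowest job.
function batchedSeconds(batches: ThreadJob[][]): number {
  return batches.reduce(
    (total, batch) => total + Math.max(0, ...batch.map((j) => j.seconds)),
    0,
  );
}

const explore: ThreadJob[] = [
  { label: "find callers", seconds: 30 },
  { label: "read schema", seconds: 45 },
  { label: "run tests", seconds: 60 },
];
const implement: ThreadJob[] = [{ label: "write migration", seconds: 90 }];

console.log(sequentialSeconds([...explore, ...implement])); // 225
console.log(batchedSeconds([explore, implement])); // 150
```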

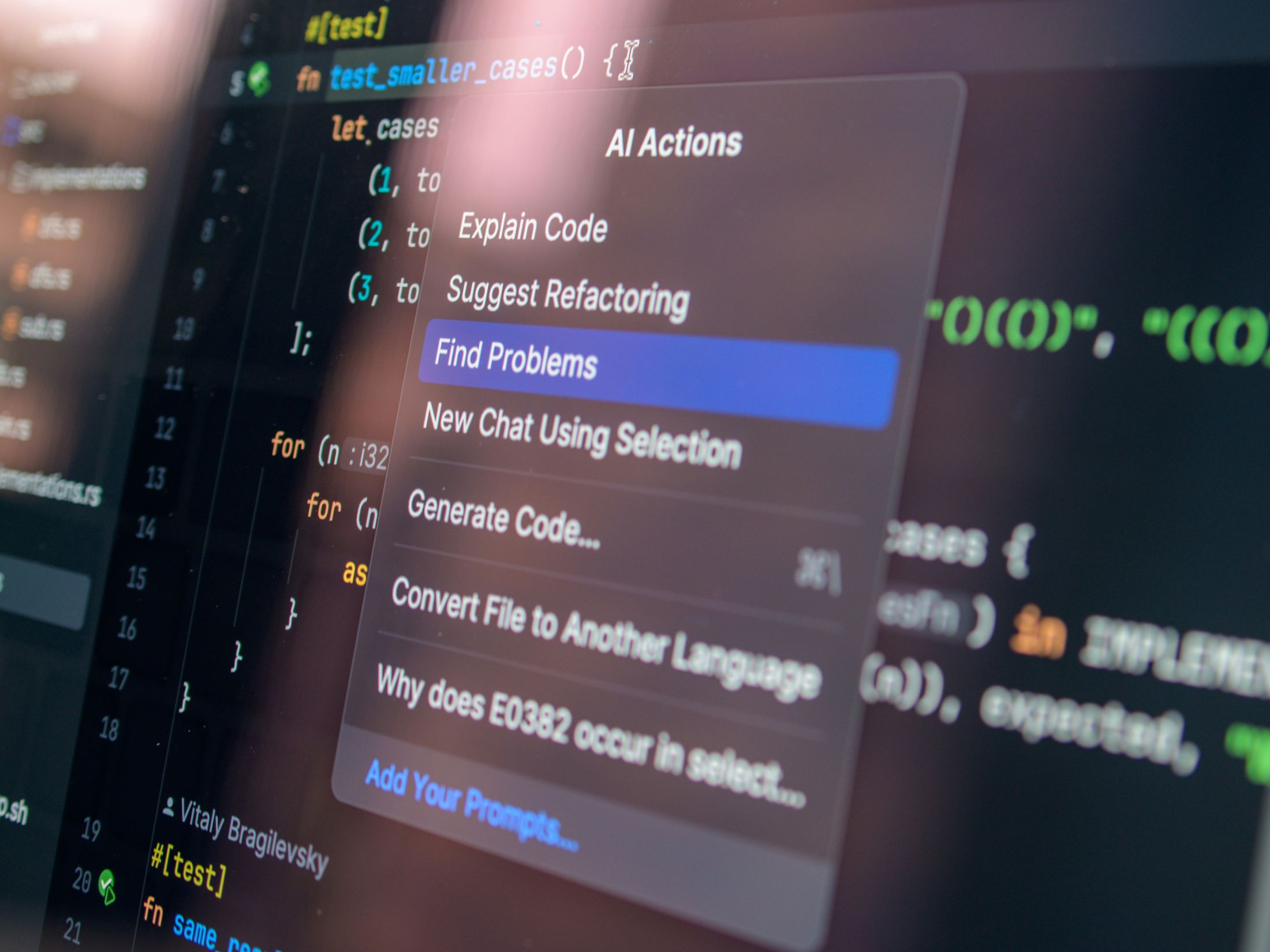

By coordinating the swarm through a TypeScript domain-specific language, the system draws on the model’s latent strategic knowledge separately from its tactical execution. Teams looking to push past traditional AI context limits and improve their engineering workflows can test the agent through the open beta, available today.
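
The article does not show Slate’s DSL, so the following is only a guess at the general shape of such an interface: a chainable TypeScript plan that records strategy as data and hands each goal to an injected runner for tactical execution. `Plan`, `thread`, `parallel`, and `run` are hypothetical names, and this sketch runs parallel goals one after another for simplicity.

```typescript
// Hypothetical plan steps: a single thread, or a group meant to run in parallel.
type Step =
  | { kind: "thread"; goal: string }
  | { kind: "parallel"; goals: string[] };

class Plan {
  private readonly steps: Step[] = [];

  thread(goal: string): Plan {
    this.steps.push({ kind: "thread", goal });
    return this;
  }

  parallel(...goals: string[]): Plan {
    this.steps.push({ kind: "parallel", goals });
    return this;
  }

  // The runner is injected, so the plan (strategy) stays independent of
  // how each goal is actually carried out (tactics).
  run(runner: (goal: string) => string): string[] {
    const episodes: string[] = [];
    for (const step of this.steps) {
      const goals = step.kind === "thread" ? [step.goal] : step.goals;
      for (const goal of goals) {
        episodes.push(runner(goal)); // sequential here; a real runner could be concurrent
      }
    }
    return episodes;
  }
}

const episodes = new Plan()
  .parallel("find callers of calculateTax", "read billing schema")
  .thread("write backwards-compatible migration")
  .run((goal) => `done: ${goal}`);
console.log(episodes);
```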

Developer is powered by TechForge Media.